If you work at an AEC firm and want to understand what these results mean for your practice, read our companion post: What Does AEC-Bench Mean for AEC Firms?

Architecture, engineering, and construction (AEC) is a $13 trillion global industry. While AI agents have reshaped software engineering workflows (with coding agents now augmenting tens of millions of developers), the built world remains the next great frontier for AI, one that has yet to be unlocked. The obstacle is not a lack of ambition but a fundamental mismatch between how AI agents perceive information and how the built world communicates.

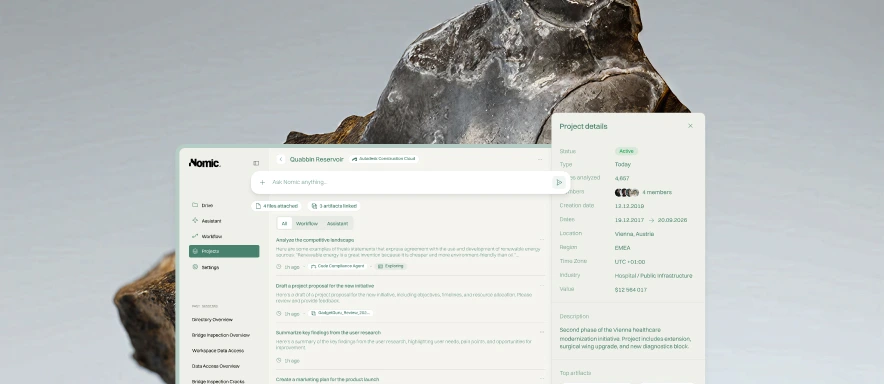

Today, we are releasing AEC-Bench — the first multimodal benchmark for evaluating AI agents on real-world architecture, engineering, and construction tasks. With 196 task instances across 9 task families, AEC-Bench provides the industry's first rigorous measurement framework for AI agent capability in construction coordination workflows. Our results reveal that domain-specific agent design — not just larger models — is the key to unlocking AI in the built world.

We openly release our benchmark dataset, agent harness, and evaluation code at github.com/nomic-ai/aec-bench under an Apache 2.0 license for full replicability.

Why the Built World Breaks AI Agents

Construction documents are among the most information-dense artifacts produced in any industry. A single drawing set can span hundreds of pages of tightly packed annotations, callouts, linework, and cross-references that require sophisticated visual reasoning to interpret. These are not digital-native documents like source code — they are 2D views exported from 3D modeling software, where meaning is conveyed through structured visual and textual elements including plans, details, callouts, notes, and title blocks.

Coordination failures between architects, engineers, and construction teams are the main drivers of scheduling delays and budget overruns in construction projects. During pre-construction, design, engineering, and handoff, many delays arise from inconsistencies introduced while authoring and revising drawing sets and project documents. In response, industry teams rely on standardized review and coordination workflows that demand deep professional experience and multimodal reasoning — exactly the kind of work AI agents should help with, but currently cannot reliably perform.

Standard coding agents — systems like Claude Code and Codex that excel at navigating source code repositories — approach construction documents with the same strategies they use for code: text extraction, keyword search, and image rendering. But construction documents are fundamentally different. Text extraction tools like pdftotext collapse spatial layout and geometric relationships into linear text, discarding the visual structure that carries critical meaning. Vision-based tools lack the precision needed for reliable geometric reasoning. The result is a systematic failure mode: agents retrieve incomplete or incorrect context, leading to compounding errors.
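To make the layout-collapse problem concrete, here is a toy sketch in Python. It is not pdftotext's actual algorithm, and the sheet tags, labels, and coordinates are invented for illustration. It assumes a sheet fragment with two callout tags, each printed directly above the label it refers to, and compares a row-by-row text dump with a lookup that still has access to positions.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Token:
    text: str
    x: float  # horizontal position on the sheet (arbitrary units)
    y: float  # vertical position on the sheet (arbitrary units)


# Hypothetical fragment: each tag sits directly above its own label.
tokens = [
    Token("A-501", 10, 10),
    Token("STAIR DETAIL", 10, 12),
    Token("S-201", 60, 10),
    Token("FOOTING DETAIL", 60, 12),
]


def flatten(tokens):
    """Dump tokens row by row, left to right, discarding coordinates."""
    ordered = sorted(tokens, key=lambda t: (t.y, t.x))
    return " ".join(t.text for t in ordered)


def nearest_label(tag, tokens):
    """Find the token closest to `tag` using its position on the sheet."""
    others = [t for t in tokens if t is not tag]
    return min(others, key=lambda t: hypot(t.x - tag.x, t.y - tag.y))


print(flatten(tokens))
# A-501 S-201 STAIR DETAIL FOOTING DETAIL
print(nearest_label(tokens[0], tokens).text)
# STAIR DETAIL
```

In the flattened string, the tag A-501 ends up next to S-201 rather than next to its own label, so a reader of the text alone has to guess the pairing. The positional lookup recovers it directly, which is the kind of information a linear text dump throws away.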

Our evaluation reveals just how ingrained this behavior is: across all models evaluated, 77% of agent trajectories invoked pdftotext as their primary information extraction strategy, effectively treating complex multimodal construction documents as flat text files. Codex-based agents relied entirely on Bash (100% of interactions), executing every action as a shell command. This coding-oriented tool repertoire is fundamentally mismatched with the demands of multimodal AEC document coordination.

Introducing AEC-Bench

AEC-Bench is a multimodal benchmark grounded in real-world construction coordination workflows, developed in collaboration with domain experts including practicing architects and engineers. We curated 196 task instances across 9 task families, organized into three scopes of increasing complexity based on how much document context an agent needs to complete the coordination task:

Intra-Sheet: Tasks solvable from a single page. Examples include checking whether callouts match referenced elements, verifying detail titles, and reviewing a local assembly. The focus is on understanding relationships between text and multimodal drawing elements on one page.

Intra-Drawing: Tasks requiring reasoning across multiple sheets within the same drawing set. Examples include validating cross-references, comparing sheet indices against title blocks, and tracing details across views. These tasks demand navigating between pages and tracking related information across the set.

Intra-Project: Tasks involving multiple documents, such as drawings, specifications, and submittals. Examples include identifying conflicts between specs and drawings and evaluating submittals for compliance. These tasks reflect real project-level coordination, where relevant information is distributed across different documents.

Each task instance consists of a natural-language instruction, a sandboxed execution environment with real construction documents sourced from public-sector projects, and an automated verifier graded against ground truth established by professional engineers and architects.
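As a rough mental model, a task instance could be represented along the lines of the Python sketch below. This is an illustrative assumption, not the schema used in the released repository: the class names, field names, file name, sample instruction, and the exact-match verifier are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Scope(Enum):
    # The three scopes described above.
    INTRA_SHEET = "intra-sheet"
    INTRA_DRAWING = "intra-drawing"
    INTRA_PROJECT = "intra-project"


@dataclass
class TaskInstance:
    instruction: str                  # natural-language task description
    documents: list[str]              # files available in the sandbox (hypothetical)
    scope: Scope                      # how much document context is needed
    verifier: Callable[[str], bool]   # checks an answer against ground truth


def exact_match_verifier(ground_truth: str) -> Callable[[str], bool]:
    """Build a simple verifier; real verifiers are likely more involved."""
    expected = ground_truth.strip().lower()
    return lambda answer: answer.strip().lower() == expected


# Hypothetical task, invented for illustration only.
task = TaskInstance(
    instruction="Which sheet does the detail callout on the plan refer to?",
    documents=["drawings.pdf"],
    scope=Scope.INTRA_DRAWING,
    verifier=exact_match_verifier("A-501"),
)

print(task.verifier(" a-501 "))  # True
print(task.verifier("S-201"))    # False
```

Keeping the verifier as a plain function of the agent's answer reflects the idea that grading is automated against a fixed ground truth rather than judged case by case.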

Read the Paper
