We are excited to partner with Muna to release a research preview of nomic-layout — tuned and distilled for low-resource, on-device use-cases. Our series of layout models power the Parse tool calls in Nomic, enabling agents to navigate, retrieve, and make verifiable citations into large multimodal files like construction drawings.

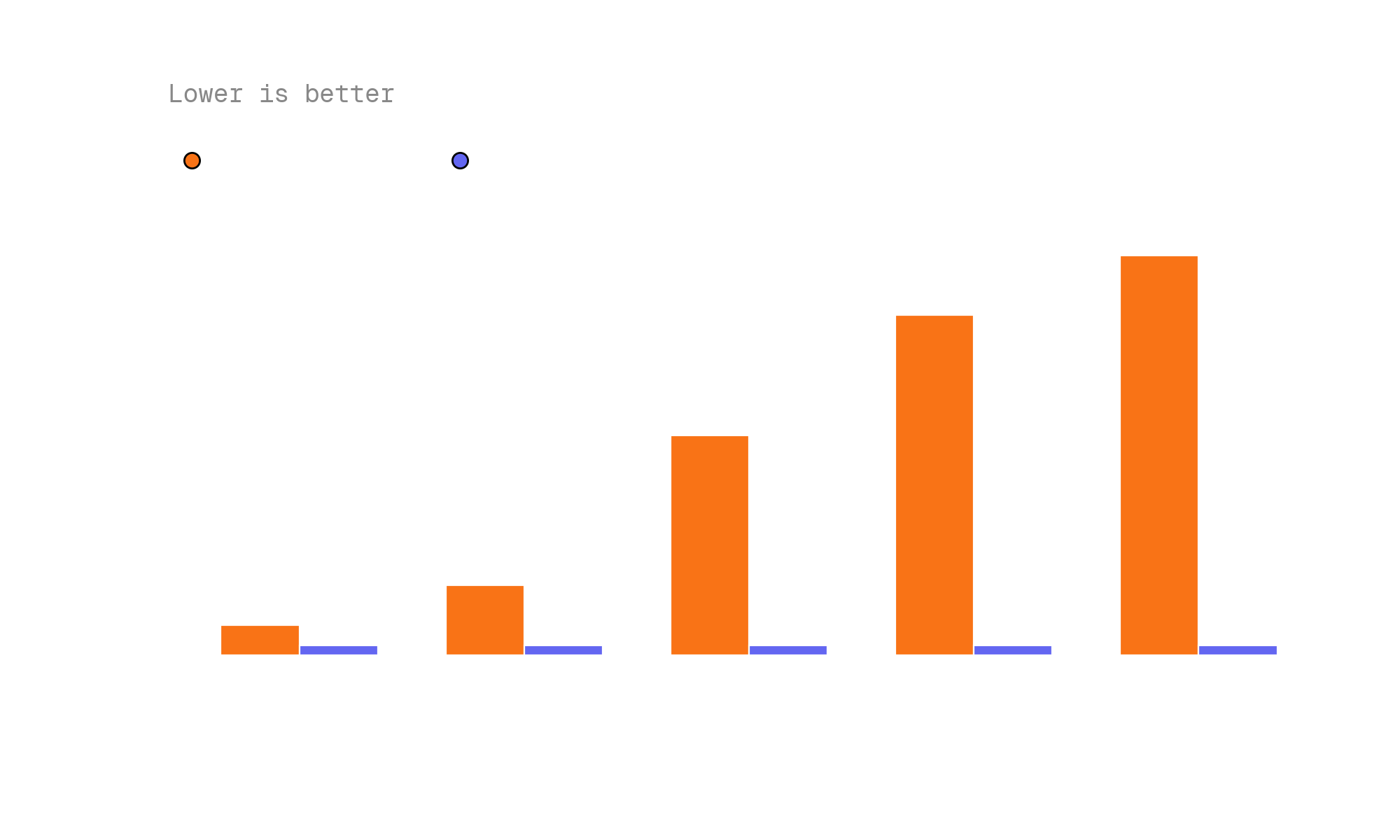

Check out Muna's companion post highlighting the release and inference benchmarks.

Muna builds an AI inference engine that specializes in running AI models on both consumer edge devices and server hardware. Their custom compiler stack produces platform-specific model binaries that fit and run on hundreds of unique chipsets and graphics cards across manufacturers.

A New Frontier for On-Device Layout

Efficient on-device inference for layout models opens up a new frontier of use-cases for agents working with PDFs. Without the need for server round trips, coding agents can freely use layout models in agent skills, and browser-side capabilities merge with local model inference to unlock entirely new workflows.

When layout understanding runs locally, the constraints change. Latency drops to milliseconds. Privacy-sensitive documents never leave the device. And agents gain a persistent, always-available capability for understanding document structure — no API keys, no network dependency, no per-page costs. Local layout parsing also dramatically reduces vision token consumption — structured layout output replaces raw page images, meaning agents spend fewer tokens on document understanding and more on the actual task.

Example Applications

We worked with Muna to build a few example applications demonstrating the new use-cases that on-device layout models enable:

An in-browser PDF agent. An agent that can solve tasks with multimodal PDF documents entirely in the browser. Layout understanding runs locally via Muna's inference engine, giving the agent the ability to parse, navigate, and reason over PDF structure without any server calls. This opens the door to browser-based document workflows that are fast, private, and work offline.

A coding agent skill, compatible with Cursor. A skill that allows coding agents to reliably use PDFs as part of their workflow. Instead of treating PDFs as opaque binary files, the agent can invoke local layout parsing to extract structured content — tables, figures, headings, and reading order — and use that structure for code generation, documentation tasks, or data extraction. The skill works with Cursor and other coding agent environments. Try it out at github.com/muna-ai/nomic-layout.
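
To make the idea of "structured content" concrete, here is a minimal sketch of how an agent-side helper might turn layout regions into compact text context. This post does not describe the actual nomic-layout output schema, so the `LayoutRegion` type, its fields, and the `to_agent_context` function are all hypothetical names chosen for illustration, not the skill's real API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayoutRegion:
    """Hypothetical layout region; the real model output may look different."""
    kind: str                                  # e.g. "heading", "text", "table", "figure"
    page: int                                  # 1-based page number
    bbox: tuple[float, float, float, float]    # (x0, y0, x1, y1) in page coordinates
    order: int                                 # reading-order index within the page
    text: str = ""                             # extracted text, empty for non-text regions


def to_agent_context(regions: list[LayoutRegion]) -> str:
    """Render regions in reading order as plain text an agent can consume."""
    ordered = sorted(regions, key=lambda r: (r.page, r.order))
    lines = []
    for r in ordered:
        if r.kind == "heading":
            lines.append(f"# {r.text}")
        elif r.kind in ("table", "figure"):
            # Keep a citable pointer (page + box) instead of inlining pixels.
            x0, y0, x1, y1 = r.bbox
            lines.append(f"[{r.kind} on page {r.page} at ({x0:g}, {y0:g}, {x1:g}, {y1:g})]")
        else:
            lines.append(r.text)
    return "\n".join(lines)


# Example with made-up regions, deliberately listed out of reading order.
example = [
    LayoutRegion("text", 1, (72, 120, 540, 300), 1, "Rated load: 5 kN."),
    LayoutRegion("heading", 1, (72, 72, 540, 100), 0, "Specification"),
    LayoutRegion("table", 2, (72, 72, 540, 400), 0),
]
print(to_agent_context(example))
```

Keeping the page number and bounding box on each region is a design choice in this sketch: it lets the agent point back to where a table or figure lives in the document rather than passing the raw page image along.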

Why On-Device Matters

Server-hosted layout models have powered production workflows at Nomic for over a year. They work well for enterprise use-cases where throughput and accuracy on large document sets are paramount. But there is a class of use-cases where the server round trip is the bottleneck — or where it is simply not an option.

Coding agents are a prime example. When an agent needs to reference a PDF mid-task — checking a specification, reading a data sheet, or verifying a requirement — the server round trip breaks the agent's flow. With on-device layout models, PDF understanding becomes a local tool call, as fast and natural as reading a file from disk.

Browser-based applications are another. Web apps that process sensitive documents — legal contracts, medical records, financial statements — benefit from layout understanding that never sends document data to a server. On-device inference makes this possible without sacrificing the quality of document parsing.

Muna's Inference Engine

Making layout models run efficiently on diverse consumer hardware is a hard systems problem. Model architectures designed for server GPUs do not automatically translate to the heterogeneous landscape of edge devices — different instruction sets, memory hierarchies, and compute capabilities across Intel, AMD, Apple, Qualcomm, and NVIDIA hardware.

Muna's approach is a custom compiler stack that takes a model and produces optimized binaries for each target platform. The result is inference that is not just portable, but fast — taking full advantage of the specific hardware capabilities available on each device. This is what makes it practical to run layout models on the devices where agents actually operate.