As AI tools become integral to AEC workflows, security and compliance have moved from IT concerns to boardroom priorities. Architecture and engineering firms handle sensitive project data — proprietary designs, client confidential information, critical infrastructure details — and construction companies manage data that, if compromised, could have safety implications.

Yet the rush to adopt AI has sometimes outpaced the security evaluation that should accompany it. A Dodge Construction Network study found that data security concerns are among the top two obstacles to AI adoption in construction (cited by 54% of respondents). These concerns are legitimate, and they deserve rigorous answers.

Here are the questions that decision makers at AEC firms should ask when evaluating AI tools — and what the answers should look like.

1. How Is Our Data Handled?

This is the most fundamental question, and the answer matters enormously. When you upload a drawing set to an AI platform for review, what happens to that data?

What to ask: Does the AI vendor retain your data after processing? Is your data used to train their models? Where is your data stored, and who has access to it?

What to look for: Zero-retention policies — meaning your data is processed and then deleted, not stored on the vendor's servers. Explicit commitments that your data will not be used for model training. Clear documentation of data storage locations and access controls.

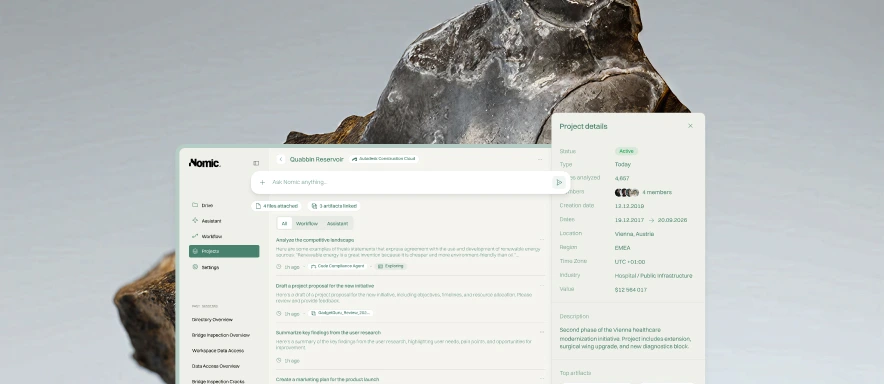

At Nomic, we operate with zero-retention policies. Your data is processed to deliver results and is not retained or used for training purposes.

2. What Compliance Standards Does the Vendor Meet?

Compliance certifications provide independent verification that a vendor's security practices meet recognized standards. They are not a guarantee of security, but they are a necessary baseline.

What to ask: Does the vendor have SOC 2 Type 2 certification? What other security certifications or audits have they completed? How often are these certifications renewed?

What to look for: SOC 2 Type 2 is the most relevant certification for SaaS vendors handling sensitive data. It covers security, availability, processing integrity, confidentiality, and privacy. Type 2 (as opposed to Type 1) means the controls have been tested over a period of time, not just at a point in time.

Nomic has completed SOC 2 Type 2 certification, with our security posture independently audited and verified. You can learn more at security.nomic.ai.

3. How Are Access Controls Managed?

AEC firms typically have complex permission structures — different team members have access to different projects, different clients have different confidentiality requirements, and different offices may have different data governance policies.

What to ask: Does the AI tool inherit existing access controls from connected data sources? Can permissions be managed at the project, team, or individual level? How are access changes propagated?

What to look for: Integration with existing identity and access management systems. Inheritance of permissions from connected platforms (SharePoint, Google Drive, etc.) rather than requiring a separate permission structure. Audit trails for data access.

4. What Happens During a Security Incident?

No system is immune to security incidents. What matters is how the vendor is prepared to respond.

What to ask: Does the vendor have a documented incident response plan? What are the notification timelines? What support is provided to affected customers?

What to look for: A formal incident response plan with defined roles, procedures, and communication protocols. Commitment to timely notification of affected customers. Post-incident analysis and remediation.

5. How Is the AI Model Itself Secured?

AI models can introduce security considerations that go beyond traditional software — including prompt injection, model extraction, and data leakage through model outputs.

What to ask: What safeguards are in place against prompt injection and other AI-specific attack vectors? Are model outputs reviewed for potential data leakage? How is the model's training data curated and secured?

What to look for: Awareness of AI-specific security risks and documented mitigations. Input validation and output filtering. Clear separation between customer data and model training data.

6. Can We Deploy On-Premise or in Our Own Cloud?

Some organizations — particularly those working on classified or highly sensitive projects — require that AI tools run within their own infrastructure.

What to ask: Does the vendor offer on-premise or private cloud deployment options? What are the infrastructure requirements? Is the same functionality available in all deployment models?

What to look for: Flexible deployment options that can accommodate different security requirements. Consistent feature parity across deployment models.

Making the Decision

Security should not be a reason to avoid AI adoption — it should be a criterion for selecting the right AI partner. The firms that get this right will have the confidence to deploy AI across their most sensitive projects, unlocking the full value of these tools without compromising their security obligations.

The right AI vendor will welcome these questions. If a vendor cannot provide clear, detailed answers to the questions above, that tells you something important about their security posture.

At Nomic, security is foundational to everything we build. Contact us to learn more about how we protect your data while delivering the benefits of domain-specific AI.