When construction firms evaluate AI tools, the conversation usually starts with capabilities: What can the AI do? Can it read drawings? Can it check codes? Can it automate takeoffs? These are important questions. But the more fundamental question — one that determines whether any AI tool will succeed in practice — is about data.

A recent Dodge Construction Network and CMiC study found that only 26% of contractors rated their data quality as "high." Concerns about data accuracy (57%) and data security (54%) topped the list of obstacles to AI adoption. This is not surprising. AI systems are only as good as the data they operate on, and the construction industry has a data problem that runs deeper than most firms realize.

The State of Construction Data

Construction data is messy for structural reasons, not just organizational ones:

Fragmentation. Project data is spread across dozens of platforms — design files in Revit, project management in Procore, documents in SharePoint, communications in email, field data in mobile apps. Each platform is a silo with its own data format, access controls, and search capabilities.

Format diversity. A single project generates data in many formats: CAD files, BIM models, PDFs, scanned documents, photographs, spreadsheets, and unstructured text. Most AI tools can only process a subset of these formats, leaving significant information gaps.

Inconsistent standards. Different firms, different offices within the same firm, and even different project teams use different naming conventions, folder structures, and documentation practices. What looks like organized data at the project level often becomes chaotic when viewed across a firm's portfolio.

Legacy data. Most firms have decades of archived project data in formats ranging from paper drawings to early-generation CAD files to superseded document management systems. This historical data is often the most valuable — it represents the firm's accumulated experience — but it is also the hardest to make AI-ready.

Why It Matters for AI

AI tools in architecture, engineering, and construction (AEC) typically fall into two categories: those that process individual documents (like drawing review or code compliance checking) and those that operate across a firm's data (like knowledge search or project analytics). Both are affected by data quality, but in different ways.

For document-level AI, the quality of the input documents matters. Drawings with clear annotations, consistent symbology, and standard formatting produce better AI results than drawings with hand-drawn markups, non-standard symbols, or poor scan quality. This is not a limitation unique to AI — human reviewers also struggle with low-quality documents — but AI amplifies the impact of data quality on output quality.

For firm-level AI, the organizational structure of data matters even more. A knowledge search system can only find information that it can access and index. If half of a firm's project archives are on disconnected servers, or if naming conventions are inconsistent across offices, the AI's coverage of the firm's knowledge will be incomplete.
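One rough way to make "incomplete coverage" concrete is to estimate what share of a firm's archived documents an indexer could actually reach. The sketch below is illustrative only: the archive names, document counts, and the `reachable` flag are made-up assumptions, not figures from any study or product.

```python
# Hypothetical inventory of a firm's archives; all names and counts are invented.
archives = [
    {"name": "office-a-sharepoint", "documents": 120_000, "reachable": True},
    {"name": "office-b-file-server", "documents": 80_000, "reachable": False},
    {"name": "legacy-dms-export", "documents": 50_000, "reachable": True},
]


def index_coverage(archives):
    """Return the fraction of documents that sit in reachable archives."""
    total = sum(a["documents"] for a in archives)
    reachable = sum(a["documents"] for a in archives if a["reachable"])
    # An empty inventory has no coverage to speak of.
    return reachable / total if total else 0.0


coverage = index_coverage(archives)
print(f"{coverage:.0%} of archived documents are indexable")  # prints "68% ..."
```

A count-based estimate like this says nothing about how valuable the unreachable documents are, so it is best read as a starting point for deciding which sources to connect first.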

Addressing the Challenge

The good news is that addressing data quality for AI does not require a firm to completely reorganize its data before starting. Practical approaches include:

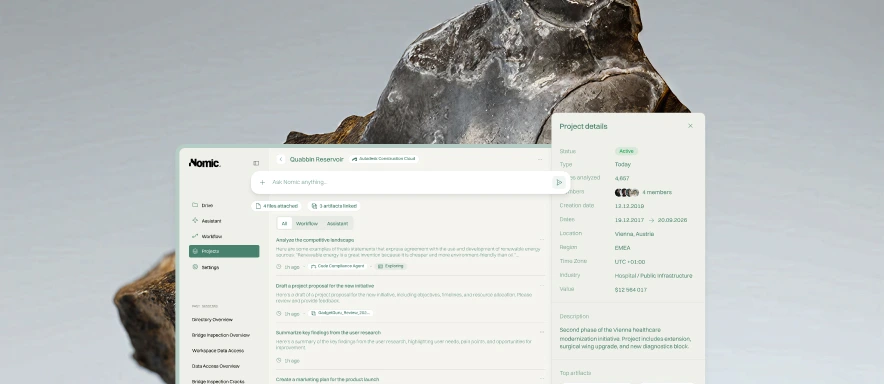

Connect to data where it lives. Rather than requiring firms to migrate all data to a single platform, effective AI systems can connect to multiple data sources — SharePoint, Google Drive, Egnyte, on-premise servers — and index content across all of them. This approach respects existing workflows and avoids the disruption of a large-scale data migration.

Inherit existing permissions. Data governance is a legitimate concern. AI systems should respect the access controls already in place, ensuring that users only see content they are authorized to access. At Nomic, we inherit existing security and access permissions from connected data sources.

Start with high-value use cases. Rather than trying to make all data AI-ready at once, firms can start with specific use cases — like searching recent project drawings or checking current submittals — that involve higher-quality, more recent data. As they see value from these initial use cases, they can expand the data scope.

Use domain-specific AI. General-purpose AI tools struggle with the format diversity and domain specificity of construction data. Domain-specific models trained on AEC content can handle the variety of document types and conventions that construction generates, reducing the data preparation burden on firms.
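The first two approaches above, connecting to data where it lives and inheriting existing permissions, can be sketched as a toy in-memory index. This is a minimal illustration under stated assumptions, not a description of how Nomic or any other product is implemented: `Document`, `FederatedIndex`, the source labels, the paths, and the group names are all hypothetical, and real connectors would read access-control lists from each source system rather than hard-coding them.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Document:
    source: str                # label for the system the file lives in (hypothetical)
    path: str                  # location inside that system; the file is not moved
    text: str                  # extracted text used for matching
    allowed_groups: frozenset  # groups copied from the source system's access controls


@dataclass
class FederatedIndex:
    """Toy index over documents that stay in their original systems."""
    documents: list = field(default_factory=list)

    def add_source(self, docs):
        # Index in place: record each document and its permissions, no migration.
        self.documents.extend(docs)

    def search(self, query, user_groups):
        terms = query.lower().split()
        hits = []
        for doc in self.documents:
            # Permission check first: skip anything the user could not open
            # in the source system.
            if not (doc.allowed_groups & user_groups):
                continue
            # Naive keyword match; a real system would use a proper search index.
            if all(term in doc.text.lower() for term in terms):
                hits.append(doc)
        return hits


index = FederatedIndex()
index.add_source([
    Document("sharepoint", "/projects/a/spec.pdf",
             "Fire rating for stair enclosure", frozenset({"project-a"})),
])
index.add_source([
    Document("egnyte", "/archive/b/rfi-12.pdf",
             "RFI on stair enclosure fire rating", frozenset({"project-b"})),
])

# A user who belongs only to project-a sees only the project-a document.
results = index.search("stair fire", user_groups=frozenset({"project-a"}))
print([d.path for d in results])  # ['/projects/a/spec.pdf']
```

Filtering on permissions before matching means restricted content never enters the result set, which is the behavior users already expect from the source systems themselves.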

Moving Forward

Data quality will not be solved overnight. It is a systemic challenge rooted in the fragmented, multi-platform, multi-format reality of how construction projects operate. But it should not be a reason to delay AI adoption. The right approach is to start with tools that can work with your data as it is, while establishing practices that improve data quality over time.

The firms that treat data quality as an ongoing practice — not a prerequisite — will be the ones that realize the value of AI soonest.